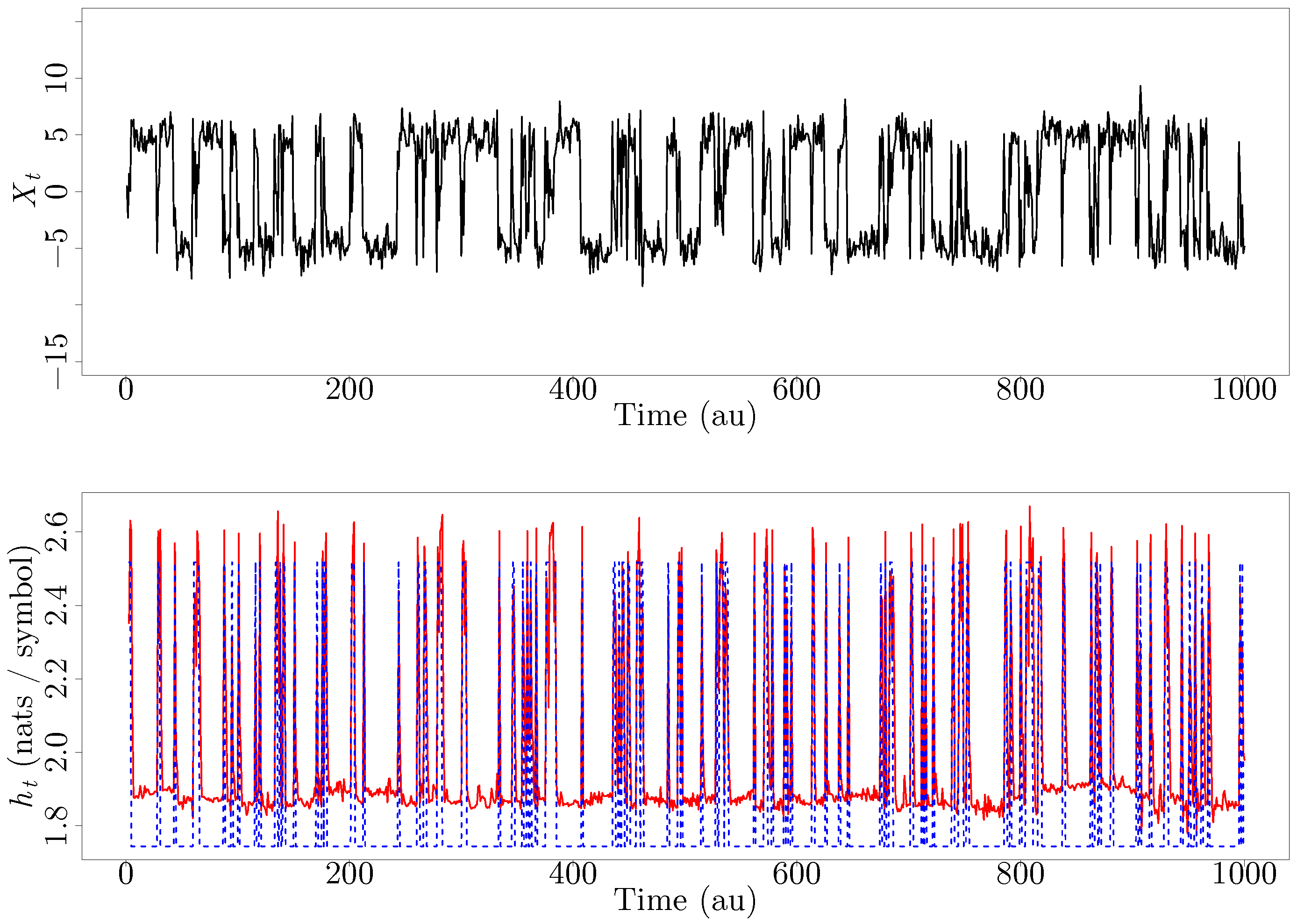

In Chapter 13, we introduced the concept of entropy in relation to solution formation. Here we further explore the nature of this state function and define it mathematically. After a cube of sugar has dissolved in a glass of water so that the sucrose molecules are uniformly dispersed in a dilute solution, they never spontaneously come back together in solution to form a sugar cube. Similarly, the molecules of a gas remain evenly distributed throughout the entire volume of a glass bulb and never spontaneously assemble in only one portion of the available volume. Explaining why these phenomena proceed spontaneously in only one direction requires an additional state function called entropy (S), a thermodynamic property of all substances that is proportional to their degree of "disorder". According to the Boltzmann equation, entropy is a measure of the number of microstates available to a system. The number of available microstates increases when matter becomes more dispersed, such as when a liquid changes into a gas or when a gas is expanded at constant temperature.
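The Boltzmann relation can be made concrete with a short calculation. The sketch below assumes the standard form $S = k_B \ln W$ and a deliberately simplified counting model in which each molecule of an ideal gas independently has twice as many positions available after the volume doubles, so $W$ grows by a factor of $2^N$. The function names are hypothetical, chosen only for illustration.

```python
import math

K_B = 1.380649e-23    # Boltzmann constant, J/K (exact SI value)
N_A = 6.02214076e23   # Avogadro constant, 1/mol (exact SI value)

def boltzmann_entropy_from_ln_w(ln_w: float) -> float:
    """S = k_B * ln W, taking ln W directly because W itself is astronomically large."""
    return K_B * ln_w

def delta_s_isothermal_expansion(n_mol: float, v1: float, v2: float) -> float:
    """Entropy change when n_mol of ideal gas expands isothermally from v1 to v2.

    Simplified model (an assumption): each of the N molecules has (v2 / v1) times
    as many positions available, so W2 / W1 = (v2 / v1) ** N and
    delta S = k_B * ln(W2 / W1) = k_B * N * ln(v2 / v1).
    """
    n_molecules = n_mol * N_A
    ln_w_ratio = n_molecules * math.log(v2 / v1)
    return boltzmann_entropy_from_ln_w(ln_w_ratio)

# One mole of gas doubling its volume at constant temperature.
ds = delta_s_isothermal_expansion(1.0, 1.0, 2.0)
print(f"Entropy change for 1 mol doubling its volume: {ds:.3f} J/K")
```

For one mole this gives $R \ln 2 \approx 5.76$ J/K, the same result as the classical $\Delta S = nR\ln(V_2/V_1)$ for isothermal expansion. Working with $\ln W$ rather than $W$ itself avoids overflow, since a number like $2^{N_A}$ cannot be represented as a float.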

Thus enthalpy is not the only factor that determines whether a process is spontaneous. Entropy-like quantities also appear well outside chemistry. Two measures of graph regularity, for example, have been defined using concepts from information theory; the first, substructure entropy, describes the number of bits needed to …

Figure: An Endothermic Reaction

The reaction of barium hydroxide with ammonium thiocyanate is spontaneous but highly endothermic, so water, one product of the reaction, quickly freezes into slush. When water is placed on a block of wood under the flask, the reaction freezes that water as well, so the flask becomes frozen to the wood.

Entropy is a measure of molecular disorder or randomness. For a reversible process at constant temperature, the entropy change of a system equals the heat transferred to it ($q_{\mathrm{rev}}$) divided by the absolute temperature: $\Delta S = q_{\mathrm{rev}}/T$.

More generally, entropy measures the amount of energy unavailable for work, the amount of disorder in a system, or the uncertainty regarding a signal's message. In ecology, species counts are used as a measure of diversity, for example in the Simpson and Berger–Parker diversity indices. In computer science, Kolmogorov complexity is a measure of the computational resources needed to specify an object; it is also known as algorithmic complexity, Solomonoff–Kolmogorov–Chaitin complexity, program-size complexity, descriptive complexity, or algorithmic entropy. Entropy can even be used to measure a brain's computational …

In cryptography, NIST glossary entries (sources: NIST SP 800-133 Rev. …, NIST SP 800-63-3, NIST SP 800-90B) define entropy as "a measure of the disorder, randomness, or variability in a closed system" and as "a measure of the amount of uncertainty an attacker faces to determine the value of a secret." A value having n bits of entropy has the same degree of uncertainty as a uniformly distributed n-bit random value. NIST SP 800-90B uses min-entropy as its measure.
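The Simpson and Berger–Parker indices, and the min-entropy measure used in NIST SP 800-90B, all operate on the same proportions $p_i$. The sketch below computes them from hypothetical species counts. It assumes the with-replacement form of Simpson's index, $D = \sum_i p_i^2$ (other variants exist, such as $1 - D$ or a finite-sample correction), and takes min-entropy as $-\log_2 \max_i p_i$.

```python
import math

def proportions(counts):
    """Convert raw counts (e.g. individuals per species) into proportions p_i, dropping zeros."""
    total = sum(counts)
    if total <= 0:
        raise ValueError("counts must contain at least one positive value")
    return [c / total for c in counts if c > 0]

def simpson_index(counts):
    """Simpson's index D = sum(p_i^2): the probability that two individuals drawn
    with replacement belong to the same species (higher means less diverse)."""
    return sum(p * p for p in proportions(counts))

def berger_parker_index(counts):
    """Berger-Parker index: proportional abundance of the most abundant species."""
    return max(proportions(counts))

def min_entropy_bits(counts):
    """Min-entropy in bits: -log2 of the probability of the most likely outcome."""
    return -math.log2(berger_parker_index(counts))

# Hypothetical community with three species.
community = [50, 30, 20]
print(simpson_index(community))        # approximately 0.38
print(berger_parker_index(community))  # 0.5
print(min_entropy_bits(community))     # 1.0

# Eight equally abundant categories behave like a uniform 3-bit value.
print(min_entropy_bits([10] * 8))      # 3.0
```

Min-entropy here is simply $-\log_2$ of the Berger–Parker index, and eight equally abundant categories give exactly 3 bits, matching the statement that n bits of entropy correspond to a uniformly distributed n-bit value.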

The term entropy was introduced as a state function to check whether a reaction is possible. The definition itself is hard to comprehend at times: when you try to think about how one would actually measure randomness, no obvious answer presents itself. NIST SP 800-22 Rev. 1a gives one, defining entropy as "a measure of the disorder or randomness in a closed system" and the entropy (uncertainty) of a random variable $X$ with probabilities $p_1, \ldots, p_n$ as

$$H(X) = -\sum_{i=1}^{n} p_i \log p_i$$
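The formula translates directly into code. The sketch below evaluates $H(X)$ with base-2 logarithms so the result is in bits; the definition leaves the base of the logarithm unspecified, so the `base` parameter is an assumption, and terms with $p_i = 0$ are skipped following the usual convention $0 \log 0 = 0$.

```python
import math

def shannon_entropy(probs, base=2.0):
    """H(X) = -sum(p_i * log p_i). Base 2 gives bits; zero-probability terms contribute 0."""
    if any(p < 0 for p in probs):
        raise ValueError("probabilities must be non-negative")
    if not math.isclose(sum(probs), 1.0, rel_tol=1e-9):
        raise ValueError("probabilities must sum to 1")
    # Natural log divided by ln(base) gives the logarithm in the requested base.
    return -sum(p * math.log(p) for p in probs if p > 0) / math.log(base)

fair_coin = shannon_entropy([0.5, 0.5])          # 1 bit
uniform_byte = shannon_entropy([1 / 256] * 256)  # 8 bits: a uniform 8-bit value
biased_coin = shannon_entropy([0.9, 0.1])        # about 0.469 bits

print(fair_coin, uniform_byte, biased_coin)
```

The biased coin is more predictable than the fair one, so its entropy is lower, while the uniform distribution over 256 values reaches the maximum of 8 bits, consistent with the idea that n bits of entropy correspond to a uniformly distributed n-bit value.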
